Introduction

The adoption of generative artificial intelligence in organizations has reached an unprecedented scale. According to the McKinsey Global Survey 2025, 78% of organizations use AI in at least one business function, while 65% regularly employ generative AI [1]. OpenAI reported reaching 1.5 million enterprise licenses in March 2025, a tenfold increase in a single year [2]. Pew Research Center reports that 28% of U.S. workers use ChatGPT for professional tasks [3].

Behind these figures lies a structural problem that most organizations have yet to address: the systematic management of prompts. If the prompt represents the primary interface between the human operator and the language model -- and the scientific literature has amply demonstrated that response quality depends directly on prompt quality [4][5] -- then the loss, duplication, and disorganization of prompts constitute a measurable and, above all, avoidable form of inefficiency.

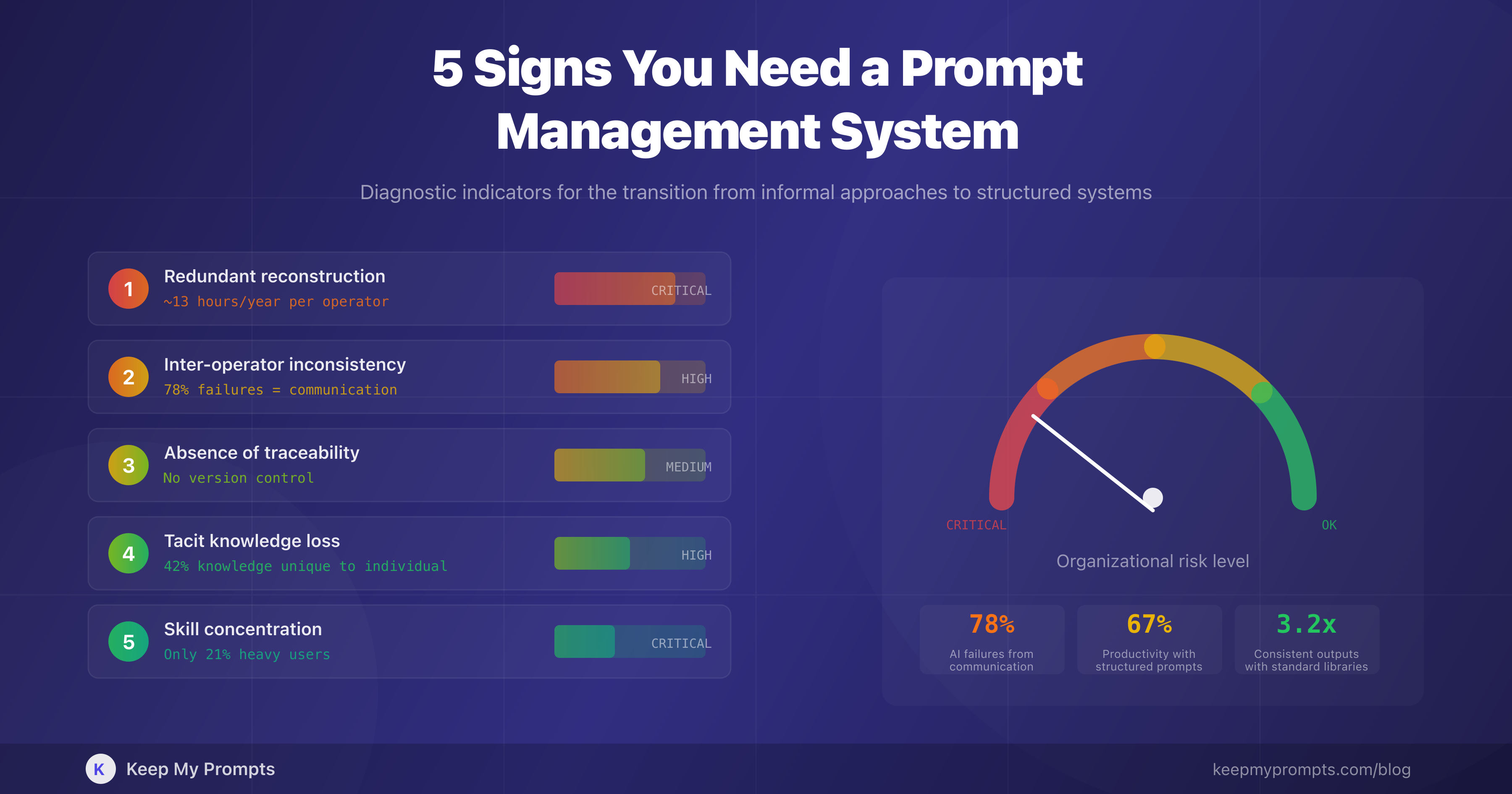

The present article identifies five diagnostic indicators that signal the need to transition from an informal approach to prompt management (text files, scattered notes, shared documents) to a structured and dedicated system. For each indicator, quantitative evidence is provided, underlying causes are analyzed, and evaluation criteria are proposed.

1. Rewriting Prompts That Were Already Created

1.1 The Phenomenon of Redundant Reconstruction

The first signal, and perhaps the most evident, is the recurring sensation of having already written a similar prompt in the past without being able to retrieve it. This phenomenon is not exclusive to prompts: research on knowledge management has extensively documented the cost of redundant information reconstruction.

According to a study conducted by Panopto, knowledge workers spend an average of 5.3 hours per week searching for information or reconstructing knowledge that already exists within the organization [6]. IDC estimated that knowledge workers spend approximately 4.5 hours per week searching for documents and, when the search fails, spend further time recreating what they could not locate [7].

Applied to the context of prompts, the phenomenon takes a specific form: a professional who uses AI daily generates dozens of prompts, progressively refines their formulation, arrives at an effective version -- and then does not save it in a retrievable manner. The following day, or the following week, the process starts from zero.

1.2 Quantifying the Impact

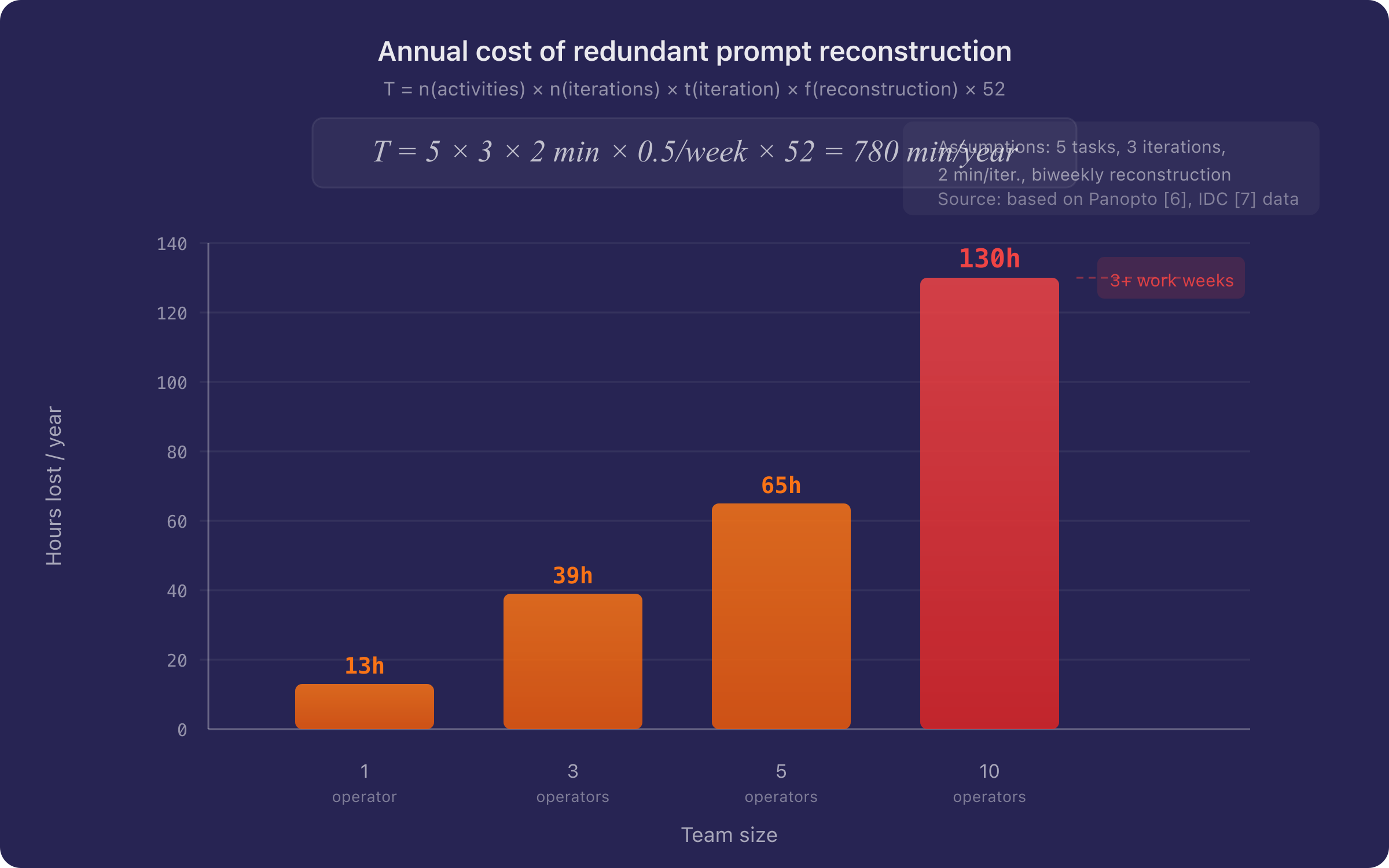

Consider a professional who uses AI for five recurring tasks (email drafting, data analysis, content creation, document review, brainstorming). If each task requires an average of 3 refinement iterations to reach the optimal prompt, and each iteration requires 2 minutes, the cost of a single optimization cycle is approximately 6 minutes. Multiplied by 5 tasks and a reconstruction frequency of even just once every two weeks, the annualized cost amounts to approximately 13 hours per individual operator.

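$$C_{\text{annual}} = n \cdot k \cdot t \cdot f \cdot 52$$

where $n$ is the number of recurring tasks, $k$ the number of refinement iterations per task, $t$ the time per iteration, and $f$ represents the weekly reconstruction frequency. For the stated values ($n = 5$, $k = 3$, $t = 2$ min, $f = 0.5$/week):

$$C_{\text{annual}} = 5 \cdot 3 \cdot 2 \cdot 0.5 \cdot 52 = 780 \text{ min} = 13 \text{ hours}$$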

In a team of 10 people, the aggregate cost is approximately 130 hours per year, more than three standard 40-hour work weeks.
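The arithmetic above can be reproduced with a short Python sketch. The function and parameter names are illustrative assumptions, and the inputs are simply the values stated in the text (five tasks, three iterations of two minutes each, one reconstruction every two weeks).

```python
def annual_reconstruction_hours(tasks: int, iterations: int,
                                minutes_per_iteration: float,
                                rebuilds_per_week: float,
                                weeks_per_year: int = 52) -> float:
    """Hours per year spent re-deriving prompts that already existed."""
    # Minutes needed to rebuild the optimized prompts for all tasks once.
    minutes_per_rebuild = tasks * iterations * minutes_per_iteration
    return minutes_per_rebuild * rebuilds_per_week * weeks_per_year / 60


# Values from the text: once every two weeks is 0.5 rebuilds per week.
per_operator = annual_reconstruction_hours(tasks=5, iterations=3,
                                           minutes_per_iteration=2,
                                           rebuilds_per_week=0.5)
team_of_ten = 10 * per_operator
print(per_operator, team_of_ten)  # 13.0 130.0
```

Changing `rebuilds_per_week` to a team's own observed frequency gives a quick first estimate of whether the signal is worth investigating.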

1.3 Diagnostic Criterion

If a team member uses the expression "I had a perfect prompt for this, but I can't find it anymore" with a frequency greater than once per week, the signal is positive.

2. Output Inconsistency Across Sessions or Among Colleagues

2.1 Inter-Operator Variance

The second signal is the systematic variability in the quality of AI-generated outputs, both across different sessions by the same user and among colleagues performing the same task. This variance is not primarily attributable to the model: its parameters are fixed for a given version and, although sampling adds some run-to-run randomness, systematic quality differences trace back to the prompt.

Research reported by SQ Magazine indicates that 78% of failures in AI projects do not stem from technological limitations, but from inadequate communication between the human operator and the model [8]. This finding suggests that prompt quality is not a marginal factor, but the primary determinant of success or failure in AI usage.

2.2 The Standardization Problem

Organizations that adopt standardized prompt libraries obtain outputs 3.2 times more consistent than those that do not, with a first-attempt success rate that increases from 34% to 87% [8]. This figure is significant: it indicates that inter-operator variability is not an inherent problem of AI, but a process problem that can be addressed through standardization.

Consider the analogy with document templates: no structured organization allows each employee to create the format of their own presentations, reports, or official emails from scratch. Shared templates, stylistic guidelines, and predefined formats exist. AI prompts deserve the same treatment, as their quality has a direct and measurable impact on productive output.
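As an illustration of what a shared prompt template might look like, here is a minimal sketch using Python's standard `string.Template`. The template wording, the placeholder names, and the `build_review_prompt` helper are hypothetical; the point is only that two colleagues who fill in the same fields get the same prompt.

```python
from string import Template

# Hypothetical shared template for one recurring task (document review).
REVIEW_TEMPLATE = Template(
    "You are reviewing a $doc_type for $audience.\n"
    "List up to $max_issues issues, ordered by severity, "
    "and suggest a fix for each.\n\n"
    "Document:\n$document"
)


def build_review_prompt(doc_type: str, audience: str, document: str,
                        max_issues: int = 5) -> str:
    """Fill the shared template; substitute() raises KeyError if a field is missing."""
    return REVIEW_TEMPLATE.substitute(doc_type=doc_type, audience=audience,
                                      document=document, max_issues=max_issues)


prompt = build_review_prompt("contract", "the legal team", "Clause 1: ...")
print(prompt)
```

Because the wording lives in one place, an improvement to the template reaches every user at once instead of staying in one person's chat history.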

2.3 Diagnostic Criterion

If two colleagues performing the same task produce qualitatively different outputs from AI, and the cause resides in the prompt formulation (not in the request itself), the signal is positive.

3. No Traceability of Prompt Evolution

3.1 The Value of Iteration

As documented in the previous article in this series [9], the iterative refinement of a prompt follows a diminishing returns curve: the first iterations produce marked improvements, while subsequent ones offer progressively smaller marginal gains. This implies that a well-calibrated prompt represents the result of a cumulative cognitive investment, and its loss is equivalent to the loss of that investment.

The problem is compounded when the refinement is not documented. Without a versioning system, it is impossible to:

- Trace back to the formulation that produced a given output

- Compare successive versions to understand which modification improved (or worsened) quality

- Share with colleagues not only the final prompt, but the optimization path

3.2 Analogy with Version Control in Software

The software industry solved an analogous problem decades ago with the introduction of version control systems (Git, SVN). No professional developer works without version control, as the cost of losing a working modification or being unable to perform a rollback is unacceptable. Prompts, as textual artifacts that determine productive outputs, deserve equivalent treatment.

The difference from software is that prompts are typically shorter and their lifecycle more rapid, which makes version control simpler to implement but no less necessary.
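To show how little machinery this requires, the following is a minimal in-memory sketch of prompt versioning with comparison and rollback. The `VersionedPrompt` class and its method names are assumptions made for illustration, not the design of any particular product; it relies only on Python's standard `difflib` and `dataclasses` modules.

```python
import difflib
from dataclasses import dataclass, field


@dataclass
class VersionedPrompt:
    title: str
    versions: list[str] = field(default_factory=list)

    def save(self, text: str) -> int:
        """Append a new version and return its 1-based number."""
        self.versions.append(text)
        return len(self.versions)

    @property
    def current(self) -> str:
        return self.versions[-1]

    def diff(self, old: int, new: int) -> list[str]:
        """Unified diff between two versions, one output line per list item."""
        return list(difflib.unified_diff(
            self.versions[old - 1].splitlines(),
            self.versions[new - 1].splitlines(),
            fromfile=f"v{old}", tofile=f"v{new}", lineterm=""))

    def rollback(self, version: int) -> int:
        """Restore an earlier version by re-saving it as the newest one."""
        return self.save(self.versions[version - 1])


p = VersionedPrompt("Weekly report summary")
p.save("Summarize this report.")
p.save("Summarize this report in 5 bullet points for executives.")
print("\n".join(p.diff(1, 2)))
p.rollback(1)  # history is preserved: version 3 is a copy of version 1
```

Rollback here appends rather than deletes, so the full optimization path stays available for later comparison or sharing.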

3.3 Diagnostic Criterion

If the question "which prompt did you use to generate that result?" does not have an immediate and verifiable answer, the signal is positive.

4. Tacit Knowledge Leaving the Organization

4.1 Prompts as Intellectual Capital

According to a study conducted by Panopto on 1,000 U.S. workers, 42% of institutional knowledge is unique to the individual and is not shared with colleagues [6]. When an employee leaves the organization, this knowledge disappears. Research published in The Learning Organization has documented how personnel turnover induces systematic knowledge loss, with replacement costs ranging from 50% to 200% of the departing employee's annual salary [10][11].

Effective prompts fully meet the definition of tacit knowledge: they are the result of personal experimentation, domain-specific understanding, and progressive calibration through trial and error. They are not documented in company manuals, they are not part of standard operating procedures, and they often reside exclusively in the chat history of the individual.

4.2 The Invisible Cost of Turnover

When a team member who has developed significant competencies in prompt formulation leaves the organization, their successor must rebuild from scratch not only domain knowledge, but the entire library of optimized prompts. Considering that it can take up to 2 years to bring a new hire to the level of their predecessor [12], and that this estimate does not include the specific time for prompt reconstruction, the overall cost is substantial.

A prompt management system transforms tacit knowledge into explicit, shared knowledge, reducing dependence on individuals and protecting the organization's intellectual capital.

4.3 Diagnostic Criterion

If the departure of a team member results in a perceptible reduction in the quality of AI-generated outputs, the signal is positive.

5. AI Usage Limited to a Few Informal "Experts"

5.1 Skill Polarization

The fifth signal is the concentration of prompting competencies in a few individuals within the team. McKinsey reports that while 90% of employees use generative AI, only 21% qualify as "heavy users" [1]. This figure suggests a strongly asymmetric skill distribution, with a minority achieving significant results and a majority using AI superficially.

Research published in Science by Dell'Acqua et al. [13] demonstrated that access to AI increases average productivity by 14%, but with significant variance: lower-performing workers improve by 43%, while already competent workers achieve marginal gains. This implies that the potential for improvement is greatest precisely for those who currently use AI least effectively -- and that a prompt sharing system could serve as a skill equalizer.

5.2 The Multiplier Effect of Sharing

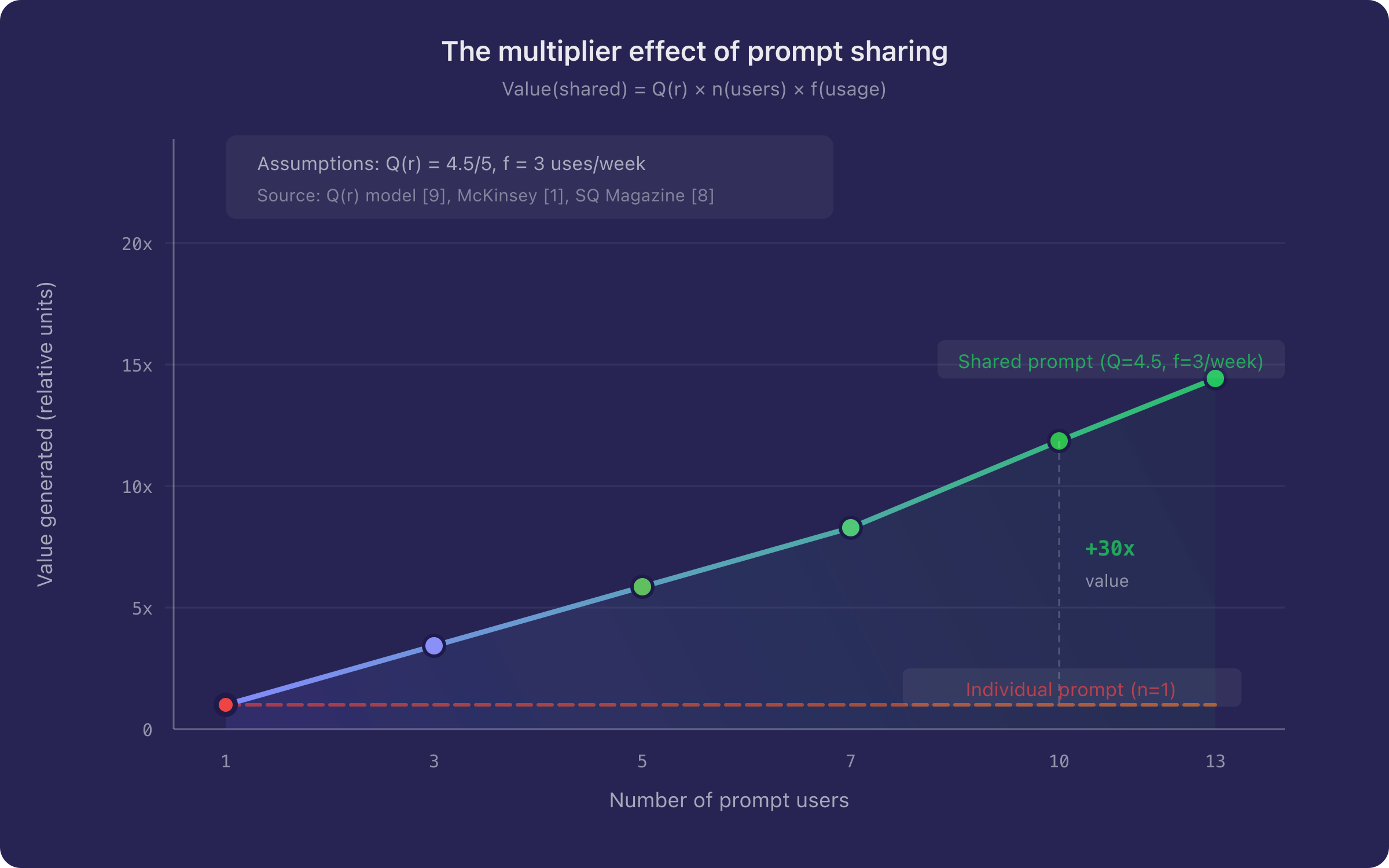

If an expert professional develops a prompt that produces excellent results for a given task, the value of that prompt multiplies linearly with the number of colleagues who can use it. In the absence of a management system, this multiplier remains equal to 1: the prompt stays confined to the individual's session.

Organizations that implement structured prompt frameworks report an average productivity improvement of 67% in AI-supported processes, while those that adopt informal approaches achieve minimal gains despite comparable technology investments [8].

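$$V = Q \cdot N \cdot F$$

where $V$ is the value generated, $Q$ is the prompt quality, $N$ the number of users, and $F$ the usage frequency. A high-quality prompt (high $Q$) shared with 10 colleagues ($N = 10$) who use it 3 times per week ($F = 3$) generates a value 30 times greater than a single individual use ($Q \cdot 10 \cdot 3 = 30Q$ versus $Q$).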
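The multiplier can be checked with a few lines of Python. This sketch assumes that value is the simple product of quality, number of users, and weekly usage frequency; the function name and the quality score of 1.0 are illustrative and not taken from any cited source.

```python
def shared_prompt_value(quality: float, users: int, uses_per_week: float) -> float:
    """Value of a prompt, assumed proportional to quality, users, and frequency."""
    return quality * users * uses_per_week


quality = 1.0  # illustrative score; it cancels out in the ratio below
single_use = shared_prompt_value(quality, users=1, uses_per_week=1)
shared = shared_prompt_value(quality, users=10, uses_per_week=3)
ratio = shared / single_use
print(ratio)  # 30.0
```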

5.3 Diagnostic Criterion

If within the team there exists a person informally identified as "the one who knows how to use ChatGPT" to whom others turn for prompts, the signal is positive.

6. Discussion: From Shared Documents to a Dedicated System

6.1 The Inadequacy of Generic Solutions

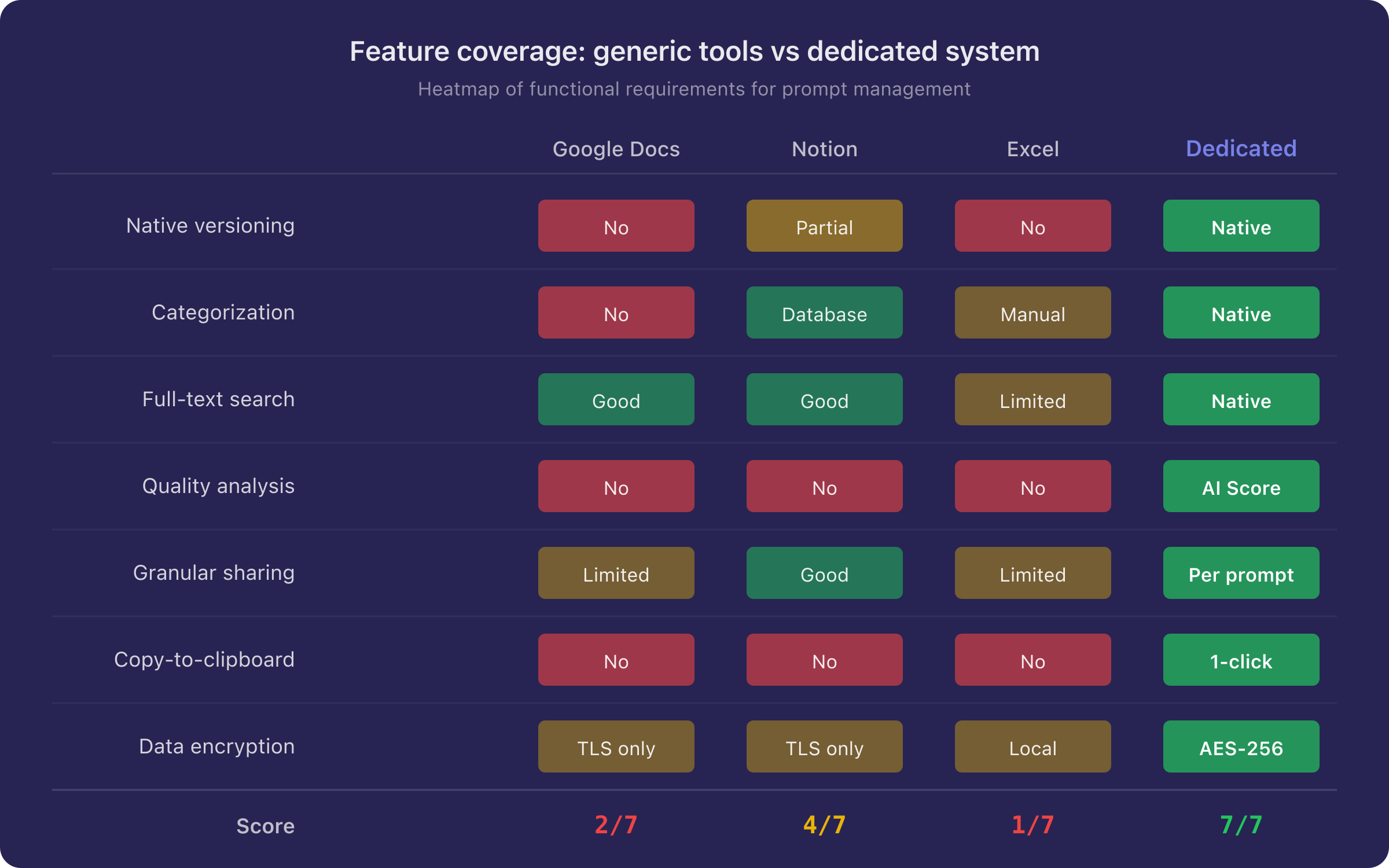

The instinctive response to the problems described above is typically the use of generic tools: a shared Google document, a Notion folder, a dedicated Slack channel, an Excel spreadsheet. These solutions, while representing a step forward from a total absence of management, present structural limitations:

| Capability | Google Docs | Notion | Spreadsheet |
|---|---|---|---|
| Native prompt versioning | No | Partial | No |
| Structured categorization | No | Yes | Manual |
| Full-text content search | Yes | Yes | Limited |
| Prompt quality analysis | No | No | No |
| Granular sharing | Limited | Yes | Limited |
| Rapid copy-to-clipboard | No | No | No |
| Data structure optimized for prompts | No | No | No |

The fundamental problem is that a generic document was not designed to manage prompts. It lacks the appropriate data structure (title, content, category, tags, versions), it lacks the interface optimized for rapid use (one-click copy, category-based search), and it lacks the infrastructure for advanced features such as automatic versioning, quality scoring, and controlled sharing.

6.2 Characteristics of an Adequate System

Based on the analysis conducted, a prompt management system should satisfy the following functional requirements:

- Persistence and retrievability: every saved prompt must be immediately retrievable through search, categorization, and tagging.

- Versioning: the system must track the evolution of each prompt, enabling comparison between versions and rollback to previous formulations.

- Controlled sharing: it must be possible to share individual prompts or libraries with colleagues or the public, while maintaining access control.

- Quality assessment: the system should provide objective indicators of prompt quality, guiding the user toward more effective formulations.

- Data portability: prompts must be exportable and importable, ensuring data ownership for the user.

- Security: prompt contents, which may include sensitive information, must be protected by adequate encryption.
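Of the requirements above, data portability is the simplest to illustrate. The sketch below round-trips a prompt library through JSON using only Python's standard `json` module; the envelope layout and the `format_version` field are assumptions made for this example, not an established interchange format.

```python
import json


def export_prompts(prompts: list[dict]) -> str:
    """Serialize prompts into a versioned JSON envelope (hypothetical layout)."""
    return json.dumps({"format_version": 1, "prompts": prompts},
                      indent=2, ensure_ascii=False)


def import_prompts(payload: str) -> list[dict]:
    """Parse an export and reject envelopes this sketch does not understand."""
    data = json.loads(payload)
    if data.get("format_version") != 1:
        raise ValueError("unsupported export format")
    return data["prompts"]


original = [{"title": "Follow-up email",
             "content": "Write a polite follow-up to ...",
             "category": "email",
             "tags": ["sales"]}]
restored = import_prompts(export_prompts(original))
print(restored == original)  # True
```

A plain-text, self-describing export of this kind is what lets a user leave a tool without losing the library.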

7. Operational Summary

The following table synthesizes the five diagnostic signals with their corresponding quantitative indicators and critical thresholds:

| # | Signal | Quantitative Indicator | Critical Threshold |
|---|---|---|---|
| 1 | Redundant reconstruction | Hours/year spent rewriting prompts | > 10 hours/year per operator |
| 2 | Inter-operator inconsistency | Qualitative output variance | First-attempt success rate < 50% |
| 3 | Absence of traceability | Non-reconstructible prompts | Question "which prompt did you use?" without answer |
| 4 | Tacit knowledge loss | Turnover impact on AI quality | Perceptible decline post-departure |
| 5 | Skill concentration | Expert-to-user ratio | < 20% heavy users in the team |

If three or more of these signals are present, the opportunity cost of not adopting a dedicated system most likely exceeds the cost of adoption itself.
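The decision rule can be expressed as a small self-assessment script. The thresholds below are the ones listed in the table; the `TeamObservation` fields and function names are hypothetical, and the sample values are illustrative rather than measured.

```python
from dataclasses import dataclass


@dataclass
class TeamObservation:
    rewrite_hours_per_operator: float    # hours/year spent rewriting prompts
    first_attempt_success_rate: float    # fraction between 0 and 1
    prompt_behind_output_known: bool     # can "which prompt did you use?" be answered?
    quality_drop_after_departure: bool   # perceptible decline after someone left
    heavy_user_share: float              # fraction of the team that are heavy users


def positive_signals(obs: TeamObservation) -> list[str]:
    """Return the names of the signals whose critical threshold is crossed."""
    checks = {
        "Redundant reconstruction": obs.rewrite_hours_per_operator > 10,
        "Inter-operator inconsistency": obs.first_attempt_success_rate < 0.50,
        "Absence of traceability": not obs.prompt_behind_output_known,
        "Tacit knowledge loss": obs.quality_drop_after_departure,
        "Skill concentration": obs.heavy_user_share < 0.20,
    }
    return [name for name, hit in checks.items() if hit]


def dedicated_system_indicated(obs: TeamObservation, minimum: int = 3) -> bool:
    return len(positive_signals(obs)) >= minimum


team = TeamObservation(13, 0.34, False, False, 0.21)
print(positive_signals(team), dedicated_system_indicated(team))
```

For this sample team, the first three signals are positive and the last two are not, so the three-signal rule is met.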

8. Conclusion

Generative artificial intelligence is no longer an experimental technology: it is a daily production tool. Like every production tool, its effectiveness depends not only on the technology itself, but on the organizational infrastructure that supports its use. Prompts are the interface between human intention and the computational capacity of the model: treating them as temporary and volatile artifacts is equivalent to forfeiting a significant portion of the value they can generate.

The data presented in this article indicate that organizations with structured approaches to prompt management report an average productivity improvement of 67% in AI-supported processes, with a first-attempt success rate that increases from 34% to 87% [8]. In a context where 80% of organizations do not yet record a tangible impact of generative AI on EBIT [1], systematic prompt management represents a concrete and immediately actionable lever.

The question is not whether a prompt management system is needed, but how long one can afford not to have one.

References

[1] McKinsey & Company, "The State of AI: How Organizations Are Rewiring to Capture Value," McKinsey Global Survey, 2025. Available: https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

[2] Azumo, "AI in the Workplace Statistics," 2025. Available: https://azumo.com/artificial-intelligence/ai-insights/ai-in-workplace-statistics

[3] Pew Research Center, "34% of U.S. Adults Have Used ChatGPT," June 2025. Available: https://www.pewresearch.org/short-reads/2025/06/25/34-of-us-adults-have-used-chatgpt-about-double-the-share-in-2023/

[4] S. Zamfirescu-Pereira, R.Y. Wong, B. Hartmann, Q. Yang, "Why Johnny Can't Prompt: How Non-AI Experts Try (and Fail) to Design LLM Prompts," Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, 2023.

[5] L. Reynolds and K. McDonell, "Prompt Programming for Large Language Models: Beyond the Few-Shot Paradigm," Extended Abstracts of the 2021 CHI Conference, 2021.

[6] Panopto, "Workplace Knowledge and Productivity Report," 2018. Available: https://www.panopto.com/blog/new-study-workplace-knowledge-productivity/

[7] IDC, "The Knowledge Worker's Day: Finding and Re-Creating Information," in The High Cost of Not Finding Information, IDC White Paper, 2021. Reference: https://www.proprofskb.com/blog/workforce-spend-much-time-searching-information/

[8] SQ Magazine, "Prompt Engineering Statistics," 2025. Available: https://sqmagazine.co.uk/prompt-engineering-statistics/

[9] S. Petrucci, "How to Write Effective ChatGPT Prompts: A Data-Driven Practical Guide," Keep My Prompts Blog, 2025. Available: https://www.keepmyprompts.com/blog/en/how-to-write-effective-chatgpt-prompts

[10] J.E. Daghfous, N. Belkhodja, L.C. Angell, "Knowledge Loss Induced by Organizational Member Turnover: A Review of Empirical Literature," The Learning Organization, vol. 30, no. 3, 2023. DOI: 10.1108/TLO-09-2022-0107

[11] Gallup, "This Fixable Problem Costs U.S. Businesses $1 Trillion," 2019. Reference: https://www.iteratorshq.com/blog/cost-of-organizational-knowledge-loss-and-countermeasures/

[12] Inc., "The Cost and Consequence of Institutional Memory Drain," 2023. Available: https://www.inc.com/bethmaser/the-cost-and-consequence-of-institutional-memory-drain/91178504

[13] F. Dell'Acqua et al., "Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality," Science, vol. 381, 2023. DOI: 10.1126/science.adh2586