Introduction

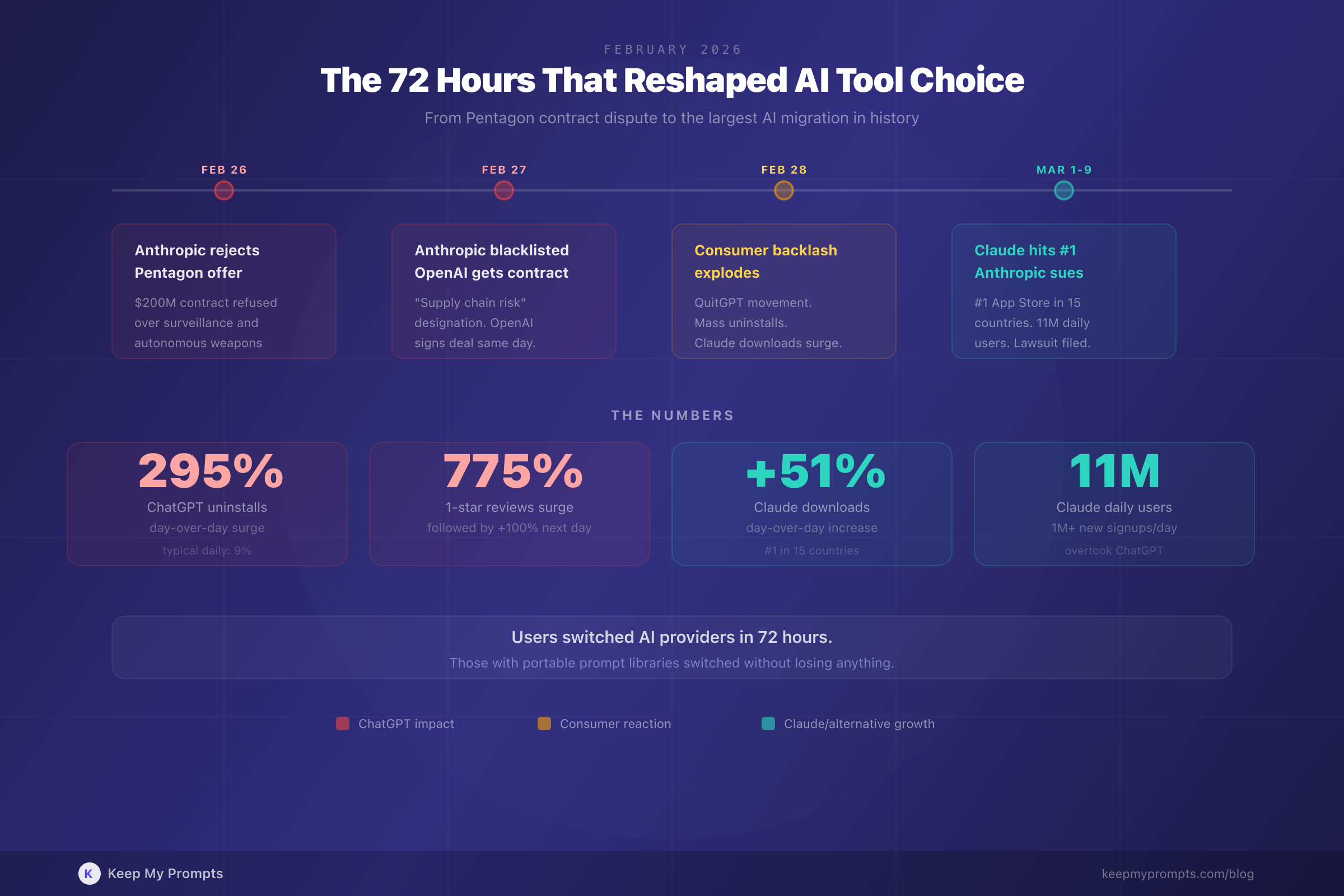

On February 27, 2026, Anthropic rejected the Pentagon's final offer for a $200 million AI contract, citing concerns about mass surveillance and autonomous weapons [1]. Hours later, OpenAI announced its own deal with the Department of Defense [2]. Within 48 hours, ChatGPT uninstalls surged 295% [3], Claude hit #1 on the U.S. App Store [4], and over 1.5 million users joined the QuitGPT boycott movement [5].

This is not an article about who was right. It is an article about what happened next, and what it means for professionals who depend on AI tools every day.

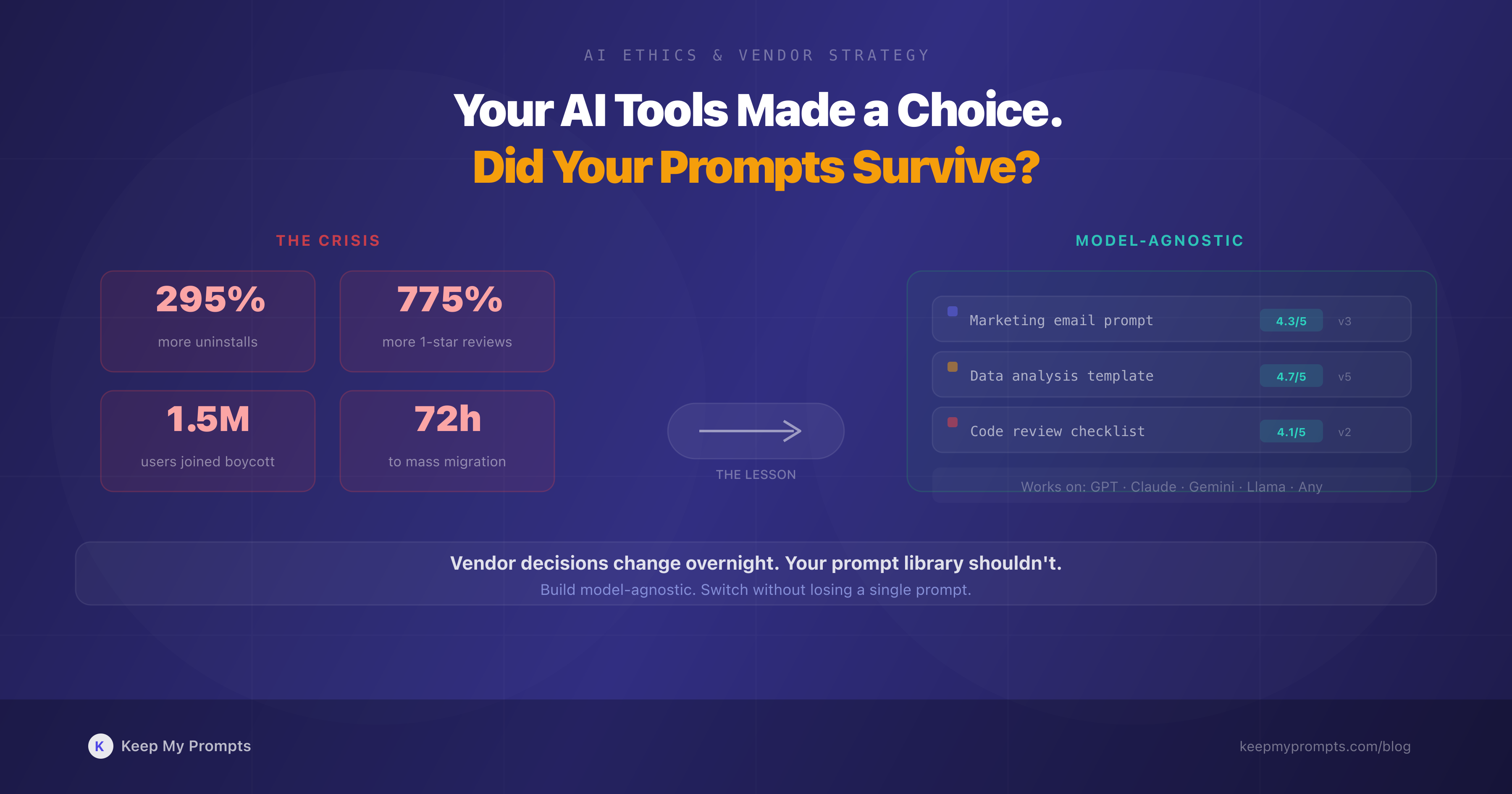

The lesson is not political. It is operational: if your entire workflow depends on a single AI vendor, you are one corporate decision away from a forced migration. The professionals who navigated the February crisis without disruption were those who had already built model-agnostic prompt libraries. Everyone else scrambled.

1. What Actually Happened: A Timeline

1.1 The Contract Dispute

The Pentagon originally chose Anthropic for a $200 million AI deployment in classified military environments. The dispute centered on usage restrictions: Anthropic insisted on contractual prohibitions against mass domestic surveillance and fully autonomous weapons systems. Defense Secretary Pete Hegseth gave Anthropic an ultimatum: allow the model to be used "for all lawful purposes" or lose the contract [1].

Anthropic's CEO Dario Amodei refused: "We cannot in good conscience accede to their request" [1]. The administration responded by designating Anthropic a "supply chain risk," a classification typically reserved for companies connected to foreign adversaries, and ordered federal agencies and military contractors to halt business with the company [6].

1.2 The Market Response

OpenAI moved quickly, securing a Pentagon contract with contractual and technical safeguards similar to those Anthropic had requested [2]. CEO Sam Altman later admitted the announcement was rushed: "It just looked opportunistic and sloppy" [7].

The consumer response was immediate and measurable:

- ChatGPT U.S. app uninstalls jumped 295% day-over-day on February 28 (compared to a typical 9% daily fluctuation) [3]

- 1-star reviews for ChatGPT surged 775% [3]

- Claude downloads increased 51% day-over-day [4]

- Claude reached #1 on the U.S. App Store and ranked as the top free app in 15 countries [4][8]

- Claude surpassed 11 million daily active users, with over 1 million daily signups [8]

1.3 The Legal Aftermath

On March 9, Anthropic sued the Department of Defense and other federal agencies over the supply chain risk designation, arguing it could jeopardize "hundreds of millions of dollars" in revenue [9]. The case remains pending.

2. Why This Matters for Every AI Professional

2.1 The Vendor Dependency Problem

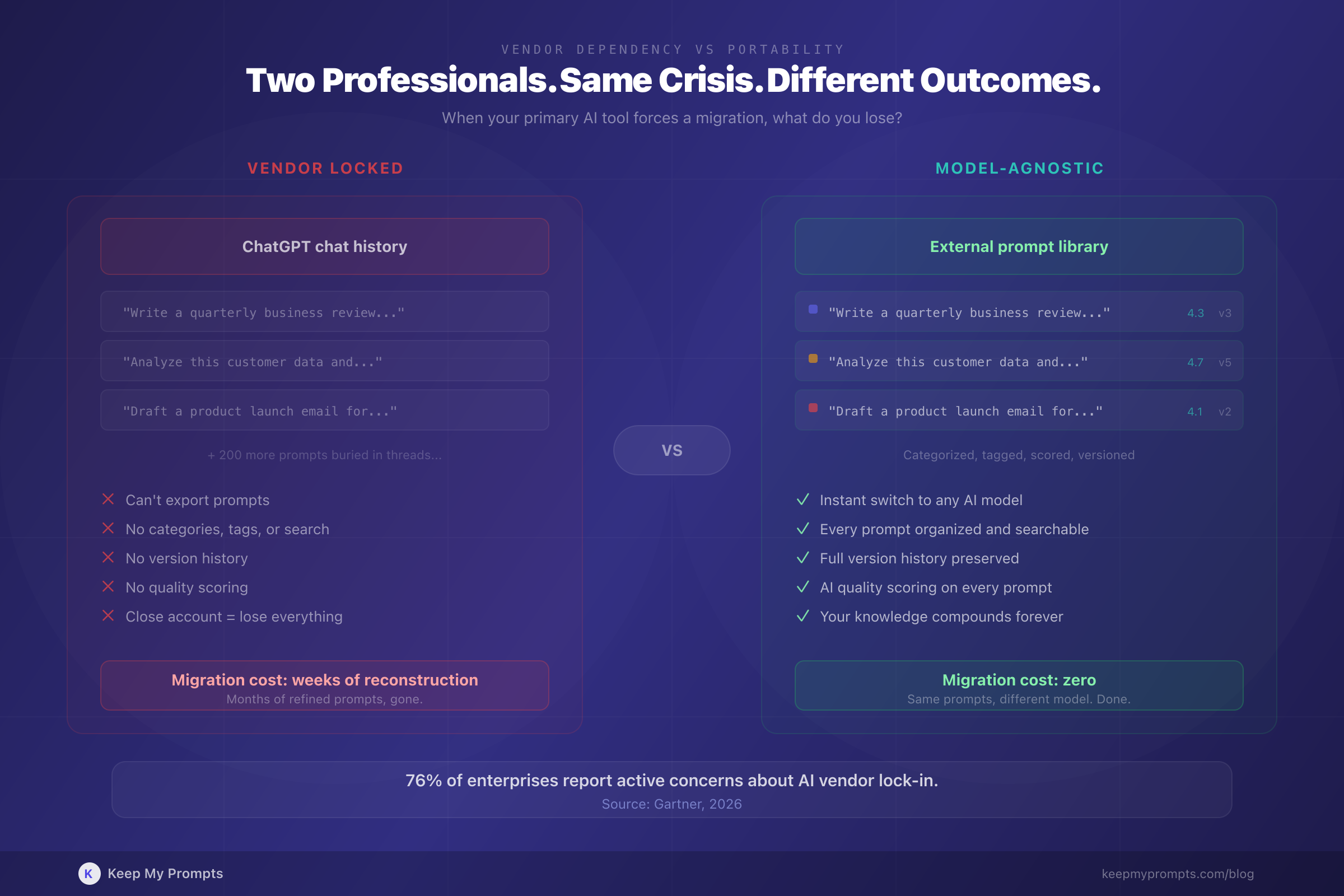

Most AI users do not think about vendor risk. They find a tool that works, build their workflows around it, and accumulate months or years of prompts, conversation histories, and institutional knowledge inside a single platform.

Then something changes. A pricing restructure. A policy shift. A corporate decision that conflicts with your values or your company's compliance requirements. Suddenly, the platform you built on is the platform you need to leave.

The February 2026 crisis made this concrete. Professionals who had invested heavily in ChatGPT-specific workflows faced a choice: stay with a vendor whose decisions they disagreed with, or start over somewhere else. Neither option is good when your prompts live exclusively inside one tool's chat history.

2.2 The Numbers Behind the Shift

The scale of the ChatGPT exodus was unprecedented for an AI tool. But the pattern is not new. Research from Gartner found that 76% of global enterprises report active concerns about AI vendor lock-in [10]. The February crisis validated those concerns in the consumer market.

What is new is the speed. It took less than 72 hours for Claude to go from a well-regarded alternative to the #1 app in the United States. Users did not gradually explore options. They switched en masse, in a matter of days, driven by a single news cycle.

This speed has implications. If you need to switch AI providers in 72 hours, you need your prompts to be portable. Chat histories are not portable. Bookmarked messages are not portable. Screenshots are not portable. A structured, categorized, tagged prompt library is.

2.3 Ethical Alignment as a Business Factor

The QuitGPT movement demonstrated something that the AI industry had not previously experienced at scale: users making tool choices based on vendor ethics, not just product quality.

This is already common in other industries. Consumers choose brands based on environmental practices. Enterprises select vendors based on data handling policies. The February crisis established that AI tool selection follows the same pattern.

For professionals, this means evaluating AI vendors on dimensions beyond performance:

- Data policies: Where is your data stored? Who can access it? Can it be used for training?

- Usage restrictions: Which uses of its technology will the vendor allow, and which will it refuse?

- Transparency: How does the vendor communicate policy changes?

- Portability: Can you export your data and workflows if you need to leave?

None of these questions has a universally correct answer. Different professionals and organizations will weigh them differently. But the February crisis proved that ignoring them entirely is a risk.

3. The Model-Agnostic Imperative

3.1 What Model-Agnostic Means in Practice

A model-agnostic approach to AI does not mean avoiding preferences. It means structuring your workflows so that your accumulated knowledge (prompts, templates, context patterns, and optimization history) is not locked inside any single vendor's ecosystem.

In practical terms, this means:

- Storing prompts externally, not in chat histories

- Writing prompts that work across models, not prompts that rely on vendor-specific features

- Categorizing by function, not by tool (e.g., "content marketing prompts" not "my ChatGPT prompts")

- Versioning prompts so you can track what works on which model

- Scoring prompts objectively, using criteria that apply regardless of which model processes them
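
The practices in this list can be captured in a small data structure. The following is a minimal sketch, not a prescribed schema: the class names `PromptRecord` and `PromptVersion`, their fields, and the example prompts are all hypothetical, chosen only to show prompts filed by function, versioned, and labeled with the model each version was tested on.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptVersion:
    """One variant of a prompt, tracked against the model it was tested on."""
    number: int
    text: str
    model: str                      # free-form label such as "gpt" or "claude" (illustrative)
    score: Optional[float] = None   # filled in once the variant has been evaluated


@dataclass
class PromptRecord:
    """A prompt stored outside any chat history and filed by function, not by tool."""
    name: str
    function: str                   # e.g. "content-marketing", not "my ChatGPT prompts"
    tags: list[str] = field(default_factory=list)
    versions: list[PromptVersion] = field(default_factory=list)

    def add_version(self, text: str, model: str, score: Optional[float] = None) -> PromptVersion:
        """Append a new version instead of overwriting the previous one."""
        version = PromptVersion(len(self.versions) + 1, text, model, score)
        self.versions.append(version)
        return version

    def latest_for(self, model: str) -> Optional[PromptVersion]:
        """Return the most recent version recorded for the given model, if any."""
        for version in reversed(self.versions):
            if version.model == model:
                return version
        return None


# Hypothetical usage: one prompt, two versions tested on different models.
record = PromptRecord(name="blog-outline", function="content-marketing", tags=["blog", "outline"])
record.add_version("Outline a blog post about {topic}.", model="gpt", score=0.7)
record.add_version("Outline a blog post about {topic}. Use five sections.", model="claude", score=0.8)
print(record.latest_for("claude").number)
```

Keeping every version, rather than editing a prompt in place, is what makes it possible to see later which wording worked on which model.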

The professionals who had built this infrastructure before February 2026 switched from ChatGPT to Claude (or Gemini, or Llama) without losing a single prompt. Their transition cost was near zero.

3.2 The Enterprise Perspective

Enterprise AI strategy increasingly reflects this reality. InformationWeek's 2026 enterprise AI predictions describe organizations moving toward model-agnostic orchestration layers that enable multi-vendor integration [10]. Companies like Cursor built their competitive advantage specifically on being model-agnostic, allowing developers to adopt frontier models the moment they launched, rather than being limited by a single partner's choices [10].

UiPath positions itself as "the Switzerland of AI," partnering simultaneously with OpenAI, Google, and Anthropic [10]. The logic is straightforward: no single AI vendor will maintain permanent superiority, and the cost of switching should be as close to zero as possible.

Individual professionals face the same calculus at a smaller scale. The prompts you write today may work best on GPT. Tomorrow's breakthrough model might be Claude, Gemini, or something that does not exist yet. Your prompt library should outlive any single model generation.

3.3 Portability Is Not Just Technical

The February crisis highlighted a dimension of portability that goes beyond file formats and export features. Portability is also about cognitive independence: the ability to think about your AI interactions in model-neutral terms.

When you write a prompt using a structural framework like TCOF (Task, Context, Output, Format), you are writing a prompt whose structure carries over to any model. When you evaluate a prompt against six quality criteria (clarity, context, TCOF alignment, role prompting, chain-of-thought, few-shot examples), you are applying standards that transcend vendor boundaries.
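
To make the TCOF idea concrete, here is a minimal sketch that assembles a prompt from the four parts as plain labeled text, with nothing tied to a particular vendor. The function name `build_tcof_prompt`, the section layout, and the reading of "Output" as what the answer should contain and "Format" as how it should be laid out are assumptions made for illustration.

```python
def build_tcof_prompt(task: str, context: str, output: str, fmt: str) -> str:
    """Assemble a prompt from the four TCOF parts as labeled plain-text sections."""
    sections = [
        ("Task", task),
        ("Context", context),
        ("Output", output),
        ("Format", fmt),
    ]
    # Refuse to build a prompt with an empty section, so gaps are caught early.
    missing = [label for label, value in sections if not value.strip()]
    if missing:
        raise ValueError(f"Missing TCOF sections: {', '.join(missing)}")
    return "\n\n".join(f"{label}:\n{value.strip()}" for label, value in sections)


# Hypothetical example prompt.
prompt = build_tcof_prompt(
    task="Summarize the attached meeting notes.",
    context="The readers are engineers who missed the meeting.",
    output="Decisions made and open action items.",
    fmt="A bulleted list of at most eight items.",
)
print(prompt)
```

Because the result is ordinary text, the same string can be pasted into any chat interface or sent through any provider's API.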

This is the real advantage of structured prompt management. It is not just about having a backup plan. It is about building skills and knowledge that compound regardless of which AI tool you use.

4. How to Build Vendor-Resilient AI Workflows

4.1 Audit Your Current Dependency

Start by answering one question: if your primary AI tool disappeared tomorrow, how much would you lose?

If the answer involves months of prompts locked in chat histories, bookmarked conversations you cannot export, or workflows that depend on vendor-specific features, you have identified the problem.

4.2 Externalize Your Best Prompts

Every prompt that produces consistently good results deserves to exist outside the platform where you first wrote it. Copy it to an external library. Add context: what it does, when to use it, what model it was optimized for. Tag it by domain and function. This takes minutes per prompt and pays dividends indefinitely.

A well-organized prompt library is not just a backup. It is a performance multiplier. When your best prompts are organized, tagged, and searchable, you stop rewriting the same instructions from memory and start iterating on proven foundations.
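
An external library does not require a dedicated product; a plain JSON file is enough to start. The sketch below assumes a hypothetical record layout (`name`, `text`, `purpose`, `when_to_use`, `optimized_for`, `tags`) that mirrors the context suggested above, and the helper names are illustrative. It writes to a temporary directory only so the example cleans up after itself.

```python
import json
import tempfile
from pathlib import Path


def save_library(path: Path, prompts: list[dict]) -> None:
    """Write the whole library to one UTF-8 JSON file."""
    path.write_text(json.dumps(prompts, indent=2, ensure_ascii=False), encoding="utf-8")


def load_library(path: Path) -> list[dict]:
    """Read the library back; a missing file is treated as an empty library."""
    if not path.exists():
        return []
    return json.loads(path.read_text(encoding="utf-8"))


def find_by_tag(prompts: list[dict], tag: str) -> list[dict]:
    """Return every prompt that carries the given tag."""
    return [p for p in prompts if tag in p.get("tags", [])]


# Hypothetical entries; the field names are an assumption, not a standard.
library = [
    {
        "name": "release-notes",
        "text": "Write release notes from the following changelog: {changelog}",
        "purpose": "Turns a raw changelog into customer-facing notes.",
        "when_to_use": "Before each product release.",
        "optimized_for": "claude",
        "tags": ["engineering", "writing"],
    },
    {
        "name": "lead-qualifier",
        "text": "Classify this inbound message as hot, warm, or cold: {message}",
        "purpose": "First-pass triage of sales inquiries.",
        "when_to_use": "When the inbox backlog grows.",
        "optimized_for": "gpt",
        "tags": ["sales"],
    },
]

with tempfile.TemporaryDirectory() as tmp:
    library_path = Path(tmp) / "prompts.json"
    save_library(library_path, library)
    restored = load_library(library_path)

matches = [p["name"] for p in find_by_tag(restored, "writing")]
print(matches)
```

A text file like this can live in version control or a synced folder, so it survives the closure of any single account.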

4.3 Version and Score Across Models

The same prompt performs differently on different models. Version tracking lets you maintain model-specific variants while preserving the core structure. Objective scoring tells you which variant performs best on which model, turning subjective impressions into actionable data.

This is particularly valuable during transitions. When you move from one AI provider to another, scored and versioned prompts give you a systematic migration path: test your highest-value prompts first, adjust where needed, and track the results.
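
As a rough sketch of that migration path, the code below keeps the best-scoring version of each prompt per model and then lists which prompts to re-test first. The names, the 0.0 to 1.0 score scale, and the sample data are hypothetical. Treating "highest value" as "highest score on the model you are leaving" is a simplifying assumption; a real library might rank by usage frequency or business impact instead.

```python
from typing import NamedTuple


class ScoredVariant(NamedTuple):
    prompt: str    # name of the prompt in the library
    version: int
    model: str     # model the variant was scored on
    score: float   # 0.0-1.0 from whatever rubric you apply (assumed scale)


def best_variant_per_model(variants: list[ScoredVariant]) -> dict[tuple[str, str], ScoredVariant]:
    """For each (prompt, model) pair, keep the highest-scoring version."""
    best: dict[tuple[str, str], ScoredVariant] = {}
    for variant in variants:
        key = (variant.prompt, variant.model)
        if key not in best or variant.score > best[key].score:
            best[key] = variant
    return best


def migration_order(variants: list[ScoredVariant], source_model: str) -> list[str]:
    """Prompt names to re-test first: best score on the source model, descending."""
    best = best_variant_per_model(variants)
    on_source = [v for (_, model), v in best.items() if model == source_model]
    ranked = sorted(on_source, key=lambda v: v.score, reverse=True)
    return [v.prompt for v in ranked]


# Hypothetical scores for illustration only.
variants = [
    ScoredVariant("release-notes", 1, "gpt", 0.72),
    ScoredVariant("release-notes", 2, "gpt", 0.85),
    ScoredVariant("release-notes", 3, "claude", 0.80),
    ScoredVariant("bug-triage", 1, "gpt", 0.90),
    ScoredVariant("meeting-summary", 1, "gpt", 0.60),
]

order = migration_order(variants, source_model="gpt")
print(order)
```

After the move, scoring the adjusted variants on the new model and adding them to the same list shows at a glance which prompts still lag behind their old results.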

4.4 Adopt Model-Neutral Frameworks

Write prompts using structural principles that apply universally. The TCOF framework, chain-of-thought techniques, and context engineering practices work on every major language model. Prompts built on these foundations are inherently portable.

4.5 Evaluate Vendors Holistically

Performance benchmarks matter, but they are not the only criteria. When selecting or evaluating AI vendors, consider:

- Data privacy policies and their track record of enforcement

- Export capabilities for your data and interaction history

- API stability and backward compatibility

- Corporate governance and decision-making transparency

- Community and ecosystem health

The February crisis showed that vendor decisions you never anticipated can force a migration. Choosing vendors that score well on portability and transparency reduces the cost of future transitions, whether those transitions are driven by ethics, performance, pricing, or strategy.
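
One way to make such an evaluation comparable across vendors is a weighted scorecard. The sketch below is illustrative only: the criterion keys follow the list above, but the weights, the 1 to 5 rating scale, and the two example vendors are invented assumptions, and each team would set its own.

```python
# Hypothetical weights (summing to 1.0); adjust to your own priorities.
CRITERIA_WEIGHTS = {
    "data_privacy": 0.30,
    "export": 0.25,
    "api_stability": 0.20,
    "governance": 0.15,
    "ecosystem": 0.10,
}


def vendor_score(ratings: dict[str, float], weights: dict[str, float] = CRITERIA_WEIGHTS) -> float:
    """Weighted average of 1-5 ratings; every weighted criterion must be rated."""
    missing = set(weights) - set(ratings)
    if missing:
        raise KeyError(f"Unrated criteria: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * weight for name, weight in weights.items()) / total_weight


# Invented ratings for two unnamed vendors.
vendor_a = {"data_privacy": 4, "export": 2, "api_stability": 5, "governance": 3, "ecosystem": 4}
vendor_b = {"data_privacy": 5, "export": 4, "api_stability": 3, "governance": 4, "ecosystem": 2}

for name, ratings in (("vendor_a", vendor_a), ("vendor_b", vendor_b)):
    print(name, round(vendor_score(ratings), 2))
```

The number itself matters less than the exercise: writing down weights forces a team to state how much portability and transparency count relative to raw performance.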

Conclusion

The OpenAI-Pentagon episode was the AI industry's first major vendor ethics crisis at consumer scale. It will not be the last. As AI tools become more deeply integrated into professional workflows, the decisions that AI companies make about partnerships, data policies, and acceptable use will increasingly affect the people who depend on their products.

The professionals who weathered February 2026 without disruption shared one characteristic: they had decoupled their prompt knowledge from any single platform. Their prompts were stored externally, organized by function, tagged with metadata, versioned across models, and scored against objective criteria. When they needed to switch, they switched. The prompts traveled with them.

This is not about predicting the next controversy. It is about building workflows that are resilient to any change, whether that change is a pricing restructure, a policy shift, a model discontinuation, or an ethical decision you did not see coming.

Your prompts are your AI knowledge base. They represent months or years of learning what works, refined through iteration and testing. They deserve better than a chat history that disappears when you close your account.

Build your model-agnostic prompt library. It is the one investment that compounds regardless of what any single AI company decides to do next.

References

[1] CNN Business. "Anthropic rejects latest Pentagon offer: 'We cannot in good conscience accede to their request.'" February 26, 2026. https://www.cnn.com/2026/02/26/tech/anthropic-rejects-pentagon-offer

[2] NPR. "OpenAI announces Pentagon deal after Trump bans Anthropic." February 27, 2026. https://www.npr.org/2026/02/27/nx-s1-5729118/trump-anthropic-pentagon-openai-ai-weapons-ban

[3] TechCrunch. "ChatGPT uninstalls surged by 295% after DoD deal." March 2, 2026. https://techcrunch.com/2026/03/02/chatgpt-uninstalls-surged-by-295-after-dod-deal/

[4] Axios. "Anthropic got blacklisted by the Pentagon. Then Claude hit No. 1 in the app store." March 1, 2026. https://www.axios.com/2026/03/01/anthropic-claude-chatgpt-app-downloads-pentagon

[5] Medium. "1.5 Million People Just Quit ChatGPT. Here's the Story Behind the Biggest AI Revolt in History." March 2026.

[6] CNBC. "Trump admin blacklists Anthropic as AI firm refuses Pentagon demands." February 27, 2026. https://www.cnbc.com/2026/02/27/trump-anthropic-ai-pentagon.html

[7] CNBC. "OpenAI's Altman admits defense deal 'looked opportunistic and sloppy' amid backlash." March 3, 2026. https://www.cnbc.com/2026/03/03/openai-sam-altman-pentagon-deal-amended-surveillance-limits.html

[8] Android Headlines. "Claude Hit 11 Million Daily Users in 2026, Overtaking ChatGPT in App Stores." March 2026. https://www.androidheadlines.com/2026/03/claude-11-million-daily-users-2026-chatgpt.html

[9] CNBC. "Anthropic sues Trump administration over Pentagon blacklist." March 9, 2026. https://www.cnbc.com/2026/03/09/anthropic-trump-claude-ai-supply-chain-risk.html

[10] InformationWeek. "2026 Enterprise AI Predictions: Fragmentation, Commodification, and the Agent Push Facing CIOs." 2026. https://www.informationweek.com/machine-learning-ai/2026-enterprise-ai-predictions-fragmentation-commodification-and-the-agent-push-facing-cios