Introduction

In early 2024, IEEE Spectrum published an article with a provocative title: "AI Prompt Engineering Is Dead" [1]. The piece argued that language models could optimize their own prompts better than humans, casting doubt on an entire emerging discipline. A year later, Andrej Karpathy and Shopify CEO Tobi Lutke popularized a new term: "context engineering" [2][3]. By February 2026, a peer-reviewed paper comprising 9,649 experiments confirmed what practitioners had begun to suspect: the quality of context surrounding a prompt matters more than the prompt itself [4].

The narrative is compelling. It is also, in important ways, misleading.

This article examines the relationship between prompt engineering and context engineering with the specificity that the debate deserves. We will look at what context engineering actually means, what the research shows, why the "prompt engineering is dead" framing misrepresents the data, and what practitioners should do about it. The answer, as with most either/or debates in technology, is not one or the other. It is both, in the right proportions, with the right infrastructure.

1. What Context Engineering Actually Is

1.1 A Working Definition

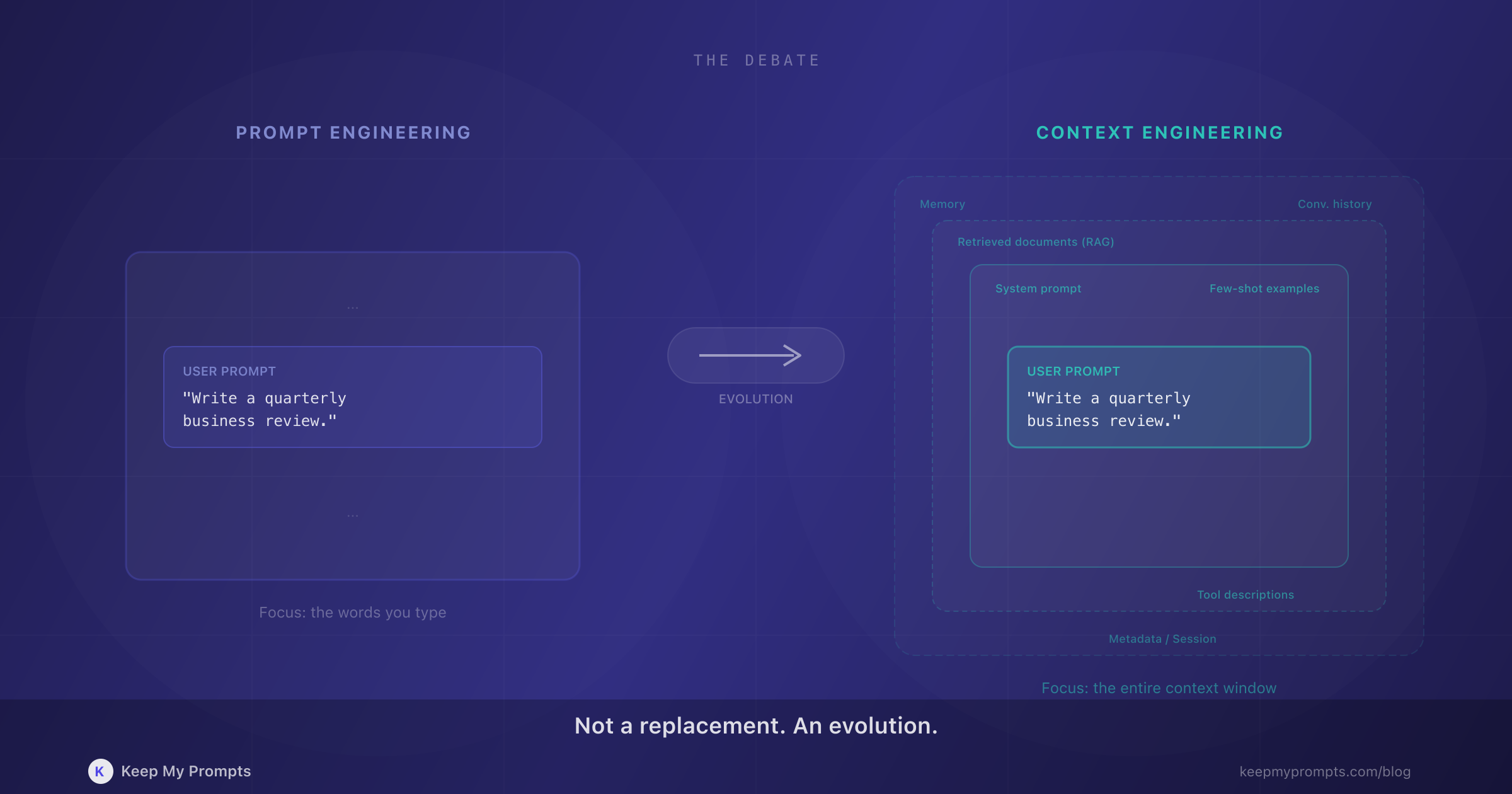

Context engineering is the practice of designing all information that a language model receives, not just the user-facing prompt. This includes system prompts, conversation history, retrieved documents (RAG), tool descriptions, memory stores, few-shot examples, and any metadata that shapes the model's behavior.

Karpathy's definition captures it well: "the delicate art and science of filling the context window with just the right information for the next step" [2]. Lutke framed it from the practitioner's perspective: "the art of providing all the context for the task to be plausibly solvable by the LLM" [3].

The distinction from prompt engineering is one of scope. Prompt engineering focuses on the user-facing input: the instruction, the question, the task description. Context engineering encompasses everything in the context window, including elements the end user never sees or controls directly.

1.2 The Components of Context

A useful way to understand context engineering is to inventory what goes into a modern LLM context window:

| Component | Example | Who Controls It |

|---|---|---|

| System prompt | Role definition, behavioral constraints | Developer |

| User prompt | The actual task or question | User |

| Conversation history | Previous messages in the session | System |

| Retrieved documents | RAG results, knowledge base excerpts | System (retrieval pipeline) |

| Tool descriptions | Available APIs, function signatures | Developer |

| Few-shot examples | Input/output pairs demonstrating desired behavior | Developer or user |

| Memory | User preferences, past interactions | System (memory layer) |

| Metadata | Timestamps, user role, session type | System |

Prompt engineering, in its traditional definition, concerns primarily one row of this table: the user prompt. Context engineering concerns all eight.

1.3 The 9,649-Experiment Paper

In February 2026, Damon McMillan published "Structured Context Engineering for File-Native Agentic Systems," the first large-scale empirical study of how context structure affects LLM agent performance [4]. The study tested 11 models across 4 formats (YAML, Markdown, JSON, TOON) with schemas ranging from 10 to 10,000 tables.

The key finding: for frontier-tier models (Claude, GPT, Gemini), file-based context retrieval improved accuracy by 2.7% (p=0.029). However, the results were mixed for open-source models (aggregate -7.7%, p<0.001). The implication is significant: context structure matters, but its impact depends on the model's capacity to leverage structured information.

What the paper does not say, despite how it has been cited in popular articles, is that prompts do not matter. The experiments held prompt quality constant to isolate context effects. The finding is that context structure adds value on top of good prompts, not that it replaces them.

2. Why the "Prompt Engineering Is Dead" Claim Is Misleading

2.1 What the Critics Actually Argue

The IEEE Spectrum article [1] made a specific claim: that AI models can optimize their own prompts, sometimes producing instructions "so bizarre, no human is likely to have ever come up with them." The argument was about automated prompt optimization at scale, not about whether humans should stop thinking carefully about their instructions.

The Stanford research on Verbalized Sampling [5] made an even narrower claim: that alignment training causes "mode collapse" in model outputs, and that meta-prompts can recover the full probability distribution. The researchers' own conclusion was explicit: "prompt engineering isn't dead; it's finally becoming a science."

Neither paper argues that writing clear, structured, contextual instructions is unnecessary. Both argue that the field is maturing beyond trial-and-error tricks.

2.2 The Enterprise vs. Individual Distinction

The context engineering conversation is driven primarily by enterprise AI teams building agent systems, RAG pipelines, and multi-step workflows. LangChain's 2025 State of Agent Engineering report found that 57% of organizations now have AI agents in production, with 32% citing quality as their top barrier [6]. In that world, context engineering is absolutely critical: the system prompt, the retrieval pipeline, the tool descriptions, and the memory architecture determine whether an agent succeeds or fails.

But most AI users are not building agent systems. They are writing prompts in ChatGPT, Claude, or Gemini to draft emails, analyze data, generate content, or solve problems. For these users, the prompt IS the primary context. There is no RAG pipeline, no tool orchestration, no memory layer. There is a person, a text box, and a language model.

Telling these users that "prompt engineering is dead" is like telling a novelist that writing sentences does not matter because publishing houses have editorial workflows.

2.3 The False Dichotomy

The framing of context engineering "replacing" prompt engineering creates a false binary. Consider what context engineering actually requires:

- System prompts are prompts. They need the same clarity, specificity, and structure as user prompts.

- RAG retrieval depends on how well queries are formulated, which is a prompt engineering problem.

- Tool descriptions are instructions to the model, written in natural language, following prompt design principles.

- Few-shot examples are a core prompt engineering technique embedded in the context.

Context engineering does not eliminate the need for good prompts. It multiplies the number of places where good prompt design is required. A poorly written system prompt inside a sophisticated RAG pipeline will produce poor results regardless of how well the retrieval works.

3. The Real Relationship Between Context and Prompts

3.1 Context Amplifies Prompt Quality

The relationship between context and prompts is multiplicative, not additive. A well-crafted prompt in a rich context produces dramatically better results than either element alone. A mediocre prompt in excellent context still underperforms a strong prompt in the same context.

Consider a practical example. You want an AI to draft a quarterly business review.

Poor prompt, no context:

Write a quarterly business review.

Good prompt, no context:

Write a Q4 2025 business review for a B2B SaaS company. Include sections for revenue performance, customer metrics, product milestones, and strategic outlook. Use a professional but direct tone. Keep it under 1,500 words.

Good prompt, rich context:

Write a Q4 2025 business review for a B2B SaaS company. Include sections for revenue performance, customer metrics, product milestones, and strategic outlook. Use a professional but direct tone. Keep it under 1,500 words.

Context: ARR grew from 5.1M. Net revenue retention is 118%. We launched two major features (AI assistant and team workspaces). Churn decreased from 3.2% to 2.1%. We hired 12 people, bringing the team to 47. The board wants to see a path to $10M ARR by Q4 2026.

The third version is not better because context replaced the prompt. It is better because a structured prompt (following TCOF principles: Task, Context, Output, Format) combined with relevant context data to produce a result that is specific, grounded, and actionable.

3.2 The Library Analogy

Context engineering and prompt engineering relate to each other like a library and the books it contains. A well-organized library (context) with poorly written books (prompts) is not useful. A collection of excellent books dumped in a pile without cataloging (good prompts, no context structure) is frustrating to navigate. Excellence requires both: well-written content organized in a retrievable system.

This analogy extends to how professionals manage their AI interactions over time. The prompts you write are the books. The categories you organize them into, the tags you assign, the notes you add, the versions you track: that is the library architecture. That is context engineering at the individual level.

3.3 Six Criteria Still Apply

Whether you are writing a user prompt or designing a system prompt for an agent pipeline, the same quality dimensions matter. Research consistently identifies six criteria that separate effective prompts from ineffective ones: clarity, context richness, TCOF alignment, role prompting, chain-of-thought structure, and few-shot examples.

Context engineering does not introduce new quality criteria. It introduces new places where those criteria must be applied. A system prompt scored on these six dimensions will perform better than one written without structural awareness, just as a user prompt does.

4. How to Practice Context Engineering Today

You do not need to build an agent framework or deploy a RAG pipeline to benefit from context engineering principles. The core insight, that organized, well-structured information around your prompts improves outcomes, applies at every scale. Here are five practices that work whether you are an individual practitioner or part of a team.

4.1 Organize Prompts by Domain and Function

When you group prompts into categories (content marketing, data analysis, code review, customer communications), you are building context domains. Each category represents a cluster of related knowledge, shared assumptions, and common patterns. The next time you work in that domain, the surrounding prompts serve as reference context that informs and improves your new prompt.

This is why a well-organized prompt library outperforms a scattered collection of saved messages. Organization is not administrative overhead; it is context architecture.

4.2 Add Metadata That Captures Context

Tags, notes, and descriptions are not busywork. They are contextual metadata that makes your prompts retrievable and reusable. A prompt tagged with #onboarding, #enterprise, #formal-tone carries context that the prompt text alone does not. Six months from now, that metadata is the difference between finding the right prompt in seconds and spending ten minutes reconstructing it from memory.

The research supports this. Knowledge management studies consistently show that metadata-rich information systems outperform those that rely on full-text search alone [7]. The same principle applies to prompt collections.

4.3 Version Your Prompts as Context Evolves

AI models change. Business requirements change. What worked for GPT-4 in January may need adjustment for Claude in March. Treating prompts as static artifacts is the equivalent of maintaining a codebase without version control.

Version history serves two purposes in context engineering. First, it preserves working configurations so you can revert if an update degrades performance. Second, it documents how your understanding of the task has evolved, which is itself a form of institutional context. The progression from v1 to v5 of a prompt tells a story about what you learned and why you changed your approach.

4.4 Score and Refine Systematically

Subjective evaluation ("this prompt seems to work") does not scale. Quantitative scoring across specific criteria provides actionable diagnostics. A prompt scoring 2.1 on context richness does not need a rewrite; it needs more background information. A prompt scoring 4.8 on clarity but 1.5 on few-shot examples has a specific, addressable gap.

Systematic scoring transforms prompt improvement from an art into an engineering practice, which is precisely the trajectory that the context engineering movement advocates.

4.5 Build a Knowledge Base, Not a Chat History

The fundamental shift in context engineering is from ephemeral to persistent. Chat histories disappear. Bookmarked messages lose their context. Screenshots are unsearchable. A dedicated prompt management system, where prompts are stored, categorized, tagged, versioned, and scored, is a personal knowledge base that compounds in value over time.

This is what separates practitioners who improve steadily from those who plateau: the infrastructure for accumulating and organizing what they learn. Every prompt you save with proper metadata is a building block for future context. Every version you track is a record of iteration. Every category you define is a context boundary that helps you think more clearly about what information belongs where.

5. What This Means for the Future

5.1 From Individual Prompts to Context-Aware Systems

The trajectory is clear. Individual prompts are evolving into prompt libraries, which are evolving into context-aware systems. Model Context Protocol (MCP), introduced by Anthropic and adopted across the industry, provides the infrastructure for models to access external context sources dynamically [8]. The professionals who have organized their prompts into structured, categorized, metadata-rich collections are building the foundation that these systems will leverage.

5.2 The Skills That Transfer

If you have learned to write clear, structured prompts following frameworks like TCOF, you have already developed the core skill that context engineering demands. The ability to specify a task precisely, provide relevant context, define expected output, and structure the format: these are not prompt engineering skills that context engineering makes obsolete. They are the fundamental competencies that context engineering applies at larger scale.

5.3 The Compounding Advantage

The professionals who will benefit most from the context engineering era are not those who abandon prompt engineering. They are those who have been building organized, versioned, scored prompt libraries all along. Because when context engineering tools and platforms mature, the raw material they need is exactly what a well-maintained prompt library provides: high-quality, categorized, metadata-rich, version-tracked prompts ready to be assembled into larger context architectures.

Conclusion

Context engineering is the natural evolution of prompt engineering, not its replacement. The skills are complementary, and the relationship is hierarchical: context engineering is the larger discipline that includes prompt engineering as a foundational component.

The "prompt engineering is dead" narrative is a useful provocation for enterprise AI teams who need to think beyond individual prompts. It is misleading for the millions of practitioners who interact with AI through text boxes every day and whose outcomes depend directly on how well they craft their instructions.

The practical path forward is clear. Write better prompts. Organize them by domain. Tag them with contextual metadata. Version them as your understanding evolves. Score them against objective criteria. Build a knowledge base that grows more useful over time.

That is context engineering. And it starts with a single, well-written prompt.

References

[1] Bhaskar, A. "AI Prompt Engineering Is Dead." IEEE Spectrum, March 2024. https://spectrum.ieee.org/prompt-engineering-is-dead

[2] Karpathy, A. Post on X (formerly Twitter), June 2025. "+1 for 'context engineering' over 'prompt engineering'. [...] context engineering is the delicate art and science of filling the context window with just the right information for the next step." https://x.com/karpathy/status/1937902205765607626

[3] Lutke, T. Post on X (formerly Twitter), June 2025. "I really like the term 'context engineering' over prompt engineering. It describes the core skill better: the art of providing all the context for the task to be plausibly solvable by the LLM." https://x.com/tobi/status/1935533422589399127

[4] McMillan, D. "Structured Context Engineering for File-Native Agentic Systems: Evaluating Schema Accuracy, Format Effectiveness, and Multi-File Navigation at Scale." arXiv:2602.05447, February 2026. https://arxiv.org/abs/2602.05447

[5] Stanford Verbalized Sampling research, as reported in "RIP Prompt Engineering? Why Verbalized Sampling Changes Everything." Analytics Vidhya, October 2025. https://www.analyticsvidhya.com/blog/2025/10/verbalized-sampling/

[6] LangChain. "State of Agent Engineering 2025." Survey of 1,340 respondents, November-December 2025. https://www.langchain.com/state-of-agent-engineering

[7] Panopto. "Workplace Knowledge and Productivity Report." Study finding that knowledge workers spend 5.3 hours per week searching for information that already exists within their organization.

[8] Anthropic. "Model Context Protocol (MCP)." Open standard for connecting AI models to external data sources and tools. https://modelcontextprotocol.io